Affective computing is the expanding intersection between technology and emotion. It is characterized by the detection of and response to human feelings.

The term Affective Computing originated in the 1990s with Rosalind Picard's paper and book of that title. Picard takes a broad view of the field and includes several far-reaching philosophical and technical issues. Not surprisingly, there are significant ethical issues too. For example, if a system can detect your emotional state, how widely should that information be available? Does it belong to you? Some of these issues resemble those raised by facial recognition, which remains very controversial.

Practical Applications of Affective Computing

Affective computing is still a young field, but an active one. It has promising applications in problem domains where face-to-face contact is less available. Remote learning and healthcare are two areas where responding to users’ emotional states could considerably enhance the effectiveness of interactions. With an aging population and severe shortages of trained staff, healthcare may be a vital problem domain for the burgeoning field of applied affective computing (AAC).

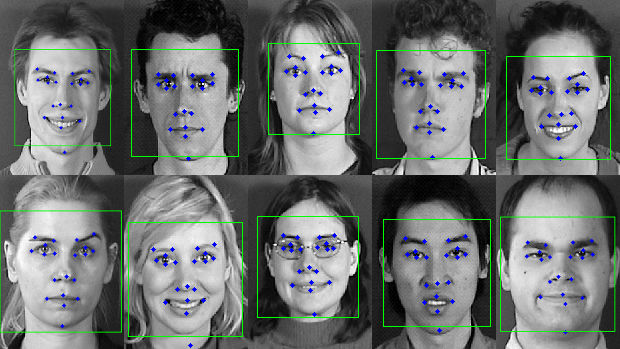

As the name suggests, AAC focuses on the practical aspects of detecting and responding to human emotions. It is a complex mix of “psychology, AI, Human-Computer Interaction (HCI), robotics, engineering, social science, and medical science” (see Applied Affective Computing below). Artificial Intelligence, in particular, has an increasingly vital role, but effective sensors and algorithms for identifying emotions are also needed. Facial expressions are critical cues for many emotions, and feature detection, as illustrated below, is a necessary stepping stone.

Detection of Static Geometric Facial Features

Intelligent Behaviour Understanding Group (iBUG), Department of Computing, Imperial College London. Fair Use.
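To make the idea of static geometric features more concrete, here is a minimal sketch in Python. It assumes a separate detector has already located a handful of facial landmarks. The landmark names, the pixel coordinates, and the two features computed are all hypothetical, chosen only for illustration, and are not taken from iBUG's work or from any particular library.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float]

# Hypothetical landmark positions in pixels (x, y), invented for this example.
# A real system would obtain these from a facial landmark detector.
landmarks: Dict[str, Point] = {
    "left_eye_outer": (30.0, 40.0),
    "right_eye_outer": (90.0, 40.0),
    "mouth_left": (45.0, 90.0),
    "mouth_right": (75.0, 90.0),
    "upper_lip": (60.0, 85.0),
    "lower_lip": (60.0, 97.0),
}


def distance(a: Point, b: Point) -> float:
    """Euclidean distance between two 2D points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def geometric_features(points: Dict[str, Point]) -> Dict[str, float]:
    """Compute two simple distance-based features from one set of landmarks.

    Each distance is divided by the distance between the outer eye corners,
    so the features do not depend on how large the face appears in the image.
    """
    scale = distance(points["left_eye_outer"], points["right_eye_outer"])
    if scale == 0:
        raise ValueError("eye corners coincide; cannot normalize")
    return {
        "mouth_width": distance(points["mouth_left"], points["mouth_right"]) / scale,
        "mouth_opening": distance(points["upper_lip"], points["lower_lip"]) / scale,
    }


features = geometric_features(landmarks)
print(features)
```

Features like these would typically be passed on to a classifier. On their own they say nothing about which emotion is being expressed.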

AAC promises to be an exciting field. Although the name itself was coined only in the late 2010s, AAC systems already outperform humans at recognizing some emotions, especially in audio-visual emotion classification.

Emotions

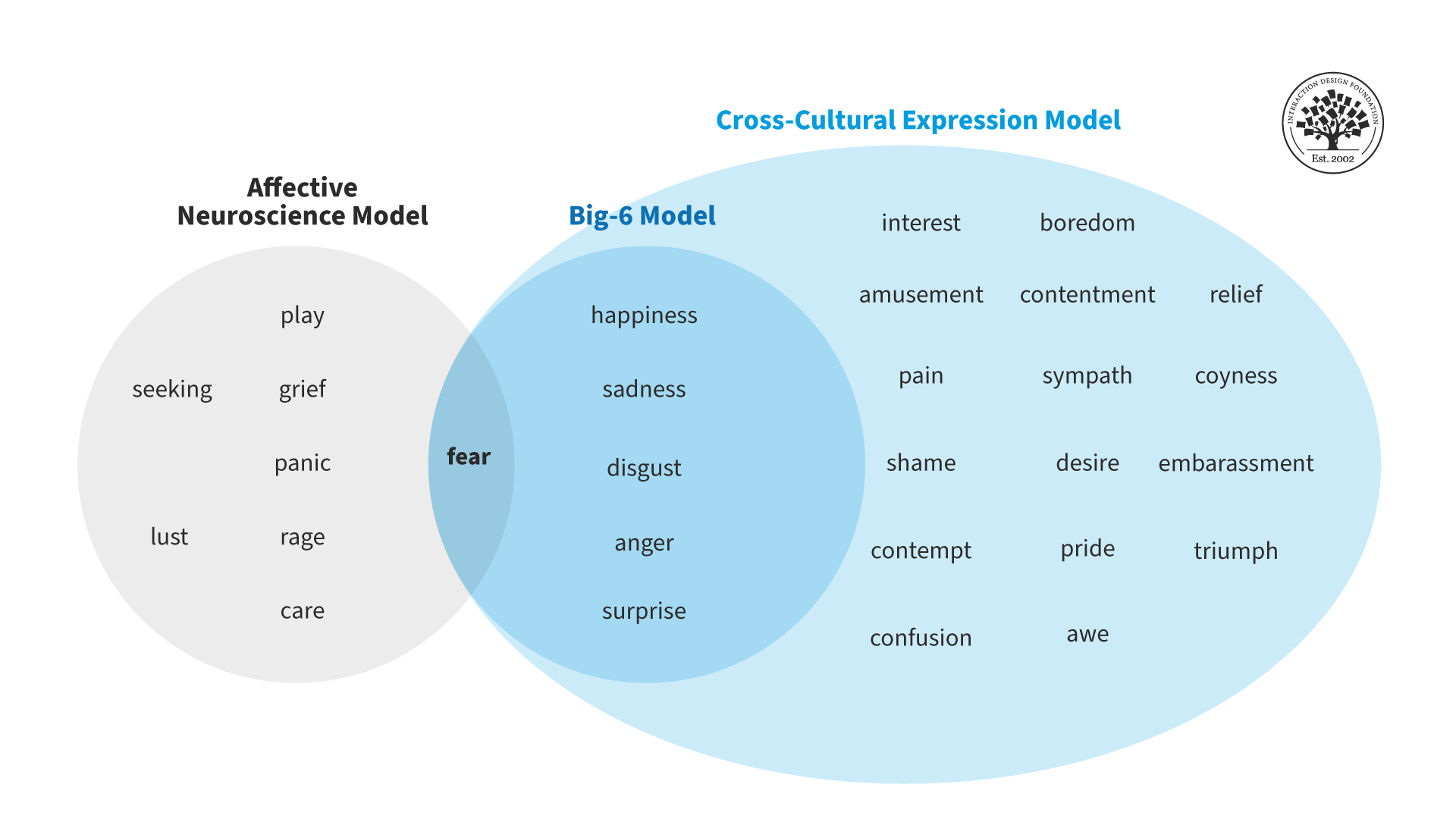

Human emotions are central to affective computing. Charles Darwin was one of the earliest authors on emotions. He was instrumental in their study through his 1872 book, The Expression of the Emotions in Man and Animals. Paul Ekman, among others, credits Darwin with being the creator of modern psychology. Later in the 20th century, Ekman was responsible for the “big-6” model of basic emotion theory, which identifies six basic emotions:

Fear

Anger

Joy

Sadness

Disgust

Surprise

Darwin’s universality hypothesis proposed that all humans convey these emotions through facial expressions, but recent evidence shows this is not necessarily true (see Jack et al. in the references below). Further study in this area has resulted in a cross-cultural expression model:

Adapted from Applied Affective Computing, Tian et al.

On the technical side, it may be some time before we can develop fully emotion-aware systems. Psychologists divide emotions into two categories. Primary emotions are basic “animal” responses from our primitive brains (the brain stem and limbic system). These are alarm responses that lead to immediate physiological reactions, so they can be readily detected. Secondary emotions are more considered and potentially complex. Some authors suggest that they ultimately produce physiological responses, but others disagree. It is doubtful whether secondary emotions can be detected reliably, particularly when it comes to identifying mixed emotions and their component causes. For example, you might be sad that a pet has died but relieved that it is no longer suffering. (See the Sloman and Damasio references below.)